Let’s look at another simple example of data modelling.

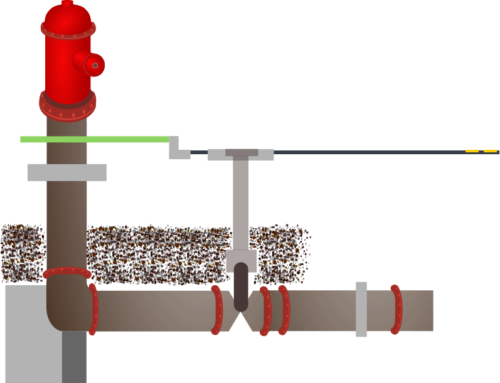

EPANET is a public domain software package that models water distribution systems. Written by the Water Supply and Water Resources Division of the US Environmental Protection Agency (EPA), it is available either as an interactive network editing program or as a toolkit that allows the analysis engine to be embedded in other applications. Many other water distribution applications embed the toolkit in this way. (If you want more information, refer to Wikipedia.)

This data modelling case study will look at the EPANET Toolkit, and specifically at the input data file, which uses a simple text format to describe the network being modelled. The EPANET Toolkit reads the input file, performs the necessary analysis and writes the results of the simulation to output file(s).

Modelling Goals

Suppose we want to store the contents of input data files in a database. As with many modelling tasks, this can be done at more than one level.

Simplistic Modelling

A data file could be modelled with no ‘intelligence’ at all. A single table with two text columns could store the name/path of the file in one column and the contents of the file in the other.

Table: DATA_FILE

| Column Name | Description |
|---|---|
| FILENAME | Name of file. |
| CONTENTS | Contents of file. |

What is wrong with this level of modelling? Reading the file and saving its contents in a database is very easy, so there are no problems there. However, the value of stored data lies in abstraction: the ideas or information that the modelling makes easier to access. Modelling at this very high level gives us almost no advantage. We could use this table to store EPANET input data files or lists of the CDs we have at home. Our model tells us only that we have a file which contains something.

If our file has been modified, we must read the file again and save it. We have no idea what has changed and no idea whether the contents of the file are useful or not.
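To make this concrete, here is a minimal sketch of the single-table model. It assumes SQLite through Python's standard `sqlite3` module, which is my choice for illustration only; the file names and contents are invented, and `save_file` and `load_file` are hypothetical helper names.

```python
import sqlite3

# Assumption: an in-memory SQLite database stands in for "a database".
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE DATA_FILE (FILENAME TEXT PRIMARY KEY, CONTENTS TEXT)")


def save_file(conn, filename, contents):
    """Store (or overwrite) a whole file as one opaque text value."""
    conn.execute(
        "INSERT OR REPLACE INTO DATA_FILE (FILENAME, CONTENTS) VALUES (?, ?)",
        (filename, contents),
    )


def load_file(conn, filename):
    """Return the stored text for a file, or None if it is not stored."""
    row = conn.execute(
        "SELECT CONTENTS FROM DATA_FILE WHERE FILENAME = ?", (filename,)
    ).fetchone()
    return None if row is None else row[0]


# The table accepts an EPANET input file and a CD list equally well,
# because it knows nothing about what either one contains.
save_file(conn, "network.inp", "[TITLE]\nExample network\n")
save_file(conn, "cds.txt", "Abbey Road\nKind of Blue\n")
```

The only question this table can answer is "what text is stored under this name?". Anything about reservoirs, valves or pipes would mean fetching the text and parsing it outside the database.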

Since we know that our data files contain information stored in lines, we could also model our data file so that we know the contents of each line.

Table: DATA_FILE

| Column Name | Description |
|---|---|
| FILENAME | Name of file. |
| LINENUM | Line number. |
| CONTENTS | Contents of line. |

Does this give us any advantage? If a file is modified, we can easily tell whether a specific line is the same or different, but it offers little other advantage. In fact, it makes reading the file more fiddly, as now we must order our fetched rows, and updating a file may require us to write many rows even if we have only inserted one new line.
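The trade-off can be sketched in a few lines. As before, SQLite via Python's `sqlite3` module is an assumption made for illustration, the sample text is invented, and `save_lines`, `load_lines` and `rows_to_write` are hypothetical helpers rather than part of any EPANET tooling.

```python
import sqlite3
from itertools import zip_longest

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE DATA_FILE ("
    " FILENAME TEXT, LINENUM INTEGER, CONTENTS TEXT,"
    " PRIMARY KEY (FILENAME, LINENUM))"
)


def save_lines(conn, filename, text):
    """Replace all stored rows for a file with one row per line."""
    conn.execute("DELETE FROM DATA_FILE WHERE FILENAME = ?", (filename,))
    conn.executemany(
        "INSERT INTO DATA_FILE VALUES (?, ?, ?)",
        [(filename, n, line) for n, line in enumerate(text.splitlines(), start=1)],
    )


def load_lines(conn, filename):
    """Rebuild the file; without ORDER BY the row order is not guaranteed."""
    rows = conn.execute(
        "SELECT CONTENTS FROM DATA_FILE WHERE FILENAME = ? ORDER BY LINENUM",
        (filename,),
    )
    return [r[0] for r in rows]


def rows_to_write(old_lines, new_lines):
    """Count line numbers whose contents differ between two versions."""
    return sum(1 for old, new in zip_longest(old_lines, new_lines) if old != new)


save_lines(conn, "network.inp", "[TITLE]\nExample network\n[PIPES]\n")
old = load_lines(conn, "network.inp")
# Insert a single new line after the first one.
new = old[:1] + ["Inserted line"] + old[1:]
touched = rows_to_write(old, new)
```

In this example one inserted line shifts every later line down by one, so `touched` counts every row from the insertion point onwards, not just the new one.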

Useful abstractions

Our modelling is only useful if the information we store is information we need. Storing information about the file itself is not our goal. What we want is the information the file contains: the network information.

Water supply networks are made up of pipes and pumps, valves and reservoirs, and the sorts of queries we might want to answer for a data file are:

- Who created this network model?

- How many reservoirs supply the network?

- How many valves larger than 150 mm in diameter are in the network?

- What is the total length of piping in the network?

Our modelling is only useful if it allows us to answer the sorts of questions that are important.

Since most of our questions will relate to the network described by the input file, our modelling must include network information. Here we are helped by the input file format we want to model, as it has already defined much of the abstraction we need. Looking closely at the input file format will show us how to proceed.

Input file format

The EPANET Toolkit works with an input text file that describes the pipe network being analysed.

A maximum of 255 characters can appear on a line. Blank lines can appear anywhere in the file, and the semicolon (;) can be used to indicate that what follows on the line is a comment, not data. The file is organised by sections, where each section begins with a keyword enclosed in square brackets. The various keywords are listed in the table below.

| Network Components | System Operation | Water Quality | Options & Reporting |
|---|---|---|---|
| [TITLE] | [CURVES] | [QUALITY] | [OPTIONS] |
| [JUNCTIONS] | [PATTERNS] | [REACTIONS] | [TIMES] |
| [RESERVOIRS] | [ENERGY] | [SOURCES] | [REPORT] |
| [TANKS] | [STATUS] | [MIXING] | |
| [PIPES] | [CONTROLS] | | |
| [PUMPS] | [RULES] | | |
| [VALVES] | [DEMANDS] | | |
| [EMITTERS] | | | |

Each section can contain one or more lines of data.
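The format rules above (blank lines ignored, `;` starting a comment, `[KEYWORD]` starting a section) are enough to sketch a first-pass reader. This is an illustration rather than the Toolkit's own parser: `split_sections` is a hypothetical helper, the sample data and its columns are invented, and treating keywords as case-insensitive and skipping any lines before the first section are assumptions on my part.

```python
def split_sections(text):
    """Group the data lines of an EPANET-style input file by section keyword."""
    sections = {}
    current = None
    for raw in text.splitlines():
        # Drop anything after a semicolon, then surrounding whitespace.
        line = raw.split(";", 1)[0].strip()
        if not line:
            continue  # blank line or comment-only line
        if line.startswith("[") and line.endswith("]"):
            current = line.upper()  # assumption: keywords are case-insensitive
            sections.setdefault(current, [])
        elif current is not None:
            sections[current].append(line)
        # Assumption: data appearing before the first section is ignored.
    return sections


# Invented sample; the column layout is for illustration only.
sample = """\
[TITLE]
Example network ; a comment

[PIPES]
;ID  Node1  Node2  Length
P1   J1     J2     100
P2   J2     J3     250
"""

sections = split_sections(sample)
```

Here `sections` maps each keyword to its list of data lines, which is already a more useful shape than one block of text: each section can be examined, and later modelled, on its own.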

The next post will start to look at these sections to see what lessons we can learn about data modelling.
